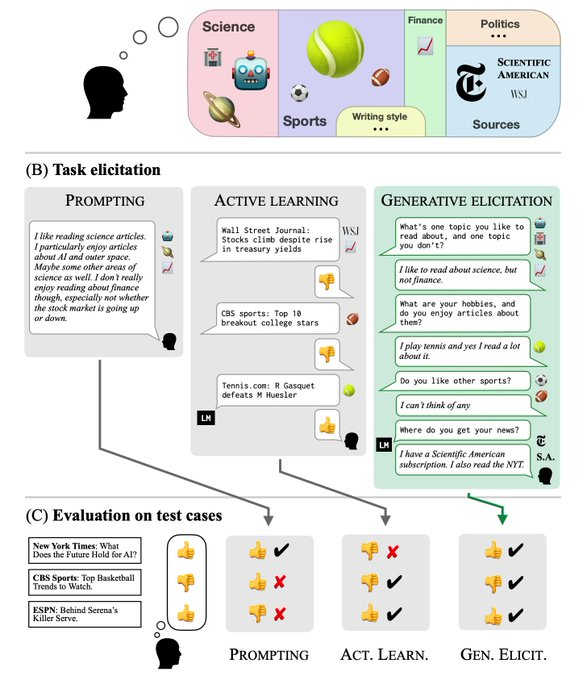

MIT researchers have developed GATE, a framework that proactively engages you in open-ended conversation to understand your needs and preferences.

Once it understands your needs, GATE generates an appropriate prompt and passes it to the LLM, so the model can produce answers that match your needs more accurately.

It’s equivalent to helping you write a prompt…

This also suggests that people who teach prompt writing or build prompt products may soon find their work under pressure.

GATE Pros:

GATE understands user needs through conversation, so users don’t need to prepare a lot of information or work everything out in advance.

This interactive approach may also allow users to think about issues they haven’t considered before, giving them a more comprehensive understanding of their needs.

The core ideas of the GATE framework:

GATE (Generative Active Task Elicitation) is simply about helping users generate more effective prompts by actively engaging in conversations with them, thereby improving the accuracy and usability of LLMs.

Open-ended interaction: The model may ask open-ended questions such as “What type of music are you looking for?” or “Do you have any particular opinions on this topic?”

Edge Case Generation: The model may also generate special or edge cases for users to tag or comment on to gain a more accurate understanding of user preferences.
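The two elicitation styles above can be pictured as two different instructions given to the model. The sketch below is only an illustration: the template wording, the `TEMPLATES` dictionary, and the `make_elicitation_prompt` helper are hypothetical and are not taken from the GATE code or paper.

```python
from typing import List

# Hypothetical prompt templates; the wording is illustrative only.
TEMPLATES = {
    "open_ended": (
        "You are learning what a user wants for this task: {task}\n"
        "Conversation so far:\n{history}\n"
        "Ask one open-ended question that would reveal the most "
        "about their preferences."
    ),
    "edge_case": (
        "You are learning what a user wants for this task: {task}\n"
        "Conversation so far:\n{history}\n"
        "Propose one borderline example and ask the user whether "
        "it fits what they want."
    ),
}


def make_elicitation_prompt(mode: str, task: str, history: List[str]) -> str:
    """Fill in the template for the chosen elicitation style."""
    if mode not in TEMPLATES:
        raise ValueError(f"unknown elicitation mode: {mode!r}")
    history_text = "\n".join(history) if history else "(nothing yet)"
    return TEMPLATES[mode].format(task=task, history=history_text)


prompt = make_elicitation_prompt(
    "edge_case", "recommend music", ["User: I like jazz."]
)
print(prompt)
```

The resulting string would be sent to whichever LLM is doing the questioning; that call is left out here because it depends on the provider.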

Working principle:

Core components of the GATE framework:

Open Interaction: The model engages in free-form, language-based interaction with users. This can be asking questions, generating examples, or any other form of language output.

User Feedback: Users provide feedback by responding to the model’s output, which is used to update the model’s understanding and predictions.

Model Updates: Based on the collected user feedback, the model’s understanding of the task is updated accordingly.

Workflow:

1. Initialization: The model begins with a basic understanding or preset task.

2. Interaction: The model generates one or more language-based outputs to guide users to provide more information.

3. Feedback Collection: Users respond to the model’s output, providing their perspectives, needs, or preferences.

4. Model update: Use the collected feedback to update the model.

5. Iteration: This process is repeated until the model can accurately understand and execute the user’s tasks.
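The five steps above can be sketched as a simple loop. This is a minimal sketch under stated assumptions, not the authors' implementation: the `elicit` function, its `ask`, `answer`, and `is_done` parameters, and the scripted stand-ins are all hypothetical, and the "update" step is modeled as nothing more than appending each exchange to a transcript that the next question can see.

```python
from typing import Callable, List, Tuple

Transcript = List[Tuple[str, str]]  # (question, answer) pairs


def elicit(
    task: str,
    ask: Callable[[str, Transcript], str],
    answer: Callable[[str], str],
    is_done: Callable[[Transcript], bool],
    max_turns: int = 5,
) -> Transcript:
    """Run the ask / answer / update loop until done or out of turns."""
    transcript: Transcript = []              # 1. initialization
    for _ in range(max_turns):               # 5. iteration
        if is_done(transcript):
            break
        question = ask(task, transcript)     # 2. interaction
        reply = answer(question)             # 3. feedback collection
        transcript.append((question, reply)) # 4. update (assumption: just record it)
    return transcript


# Scripted stand-ins so the sketch runs without an LLM or a live user.
QUESTIONS = [
    "Which platform is the game for?",
    "Do you have rules in mind?",
]
ANSWERS = {
    "Which platform is the game for?": "Mobile.",
    "Do you have rules in mind?": "Not yet, suggest something.",
}


def scripted_ask(task: str, transcript: Transcript) -> str:
    return QUESTIONS[len(transcript) % len(QUESTIONS)]


def scripted_answer(question: str) -> str:
    return ANSWERS.get(question, "Not sure.")


transcript = elicit(
    "Design an interesting game",
    scripted_ask,
    scripted_answer,
    is_done=lambda t: len(t) >= 2,
)
for question, reply in transcript:
    print(f"Q: {question}\nA: {reply}")
```

In a real system, `ask` would call an LLM with the task and transcript, and `answer` would wait for the user's reply; the `max_turns` cap keeps the conversation from running indefinitely.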

Example:

Let’s explain how the GATE framework works through a specific use case:

User Needs: Users want to create an interesting game and ask the GATE system to design it.

GATE Questions: The GATE system asks users which platform or type of game they have in mind, for example a mobile game, a PC game, or an arcade game.

User response: Users say they are considering mobile gaming and particularly enjoy jigsaw puzzles.

Further questions from GATE: The GATE system asks users if they have considered the purpose and rules of the game, or if they need some ideas or suggestions.

Refinement of user needs: Users say they haven’t decided on specific game rules and want to hear some new concepts or suggestions.

GATE Suggestion: The GATE system suggests considering adding elements of time manipulation, such as allowing players to go back in time or pause time to solve puzzles.

User feedback: Users found the idea interesting and requested more details about the game.

Final Prompt: The GATE system generates a final prompt: “Design a jigsaw puzzle game for mobile devices in which players overcome various obstacles and reach goals by manipulating time.”

This case demonstrates how GATE can understand users’ specific needs through open dialogue and generate an effective prompt accordingly, so that large language models (LLMs) can meet those needs more accurately.
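To make the last step concrete, here is one way the collected dialogue could be folded into a prompt. It is a sketch only: the `build_prompt` helper and its output layout are hypothetical, and it simply lists the exchanges, whereas the example above has GATE condense them into a single sentence.

```python
from typing import List, Tuple


def build_prompt(task: str, transcript: List[Tuple[str, str]]) -> str:
    """Combine the original task and the elicited answers into one prompt."""
    lines = [f"Task: {task}", "What the user said during elicitation:"]
    for question, reply in transcript:
        lines.append(f"- Q: {question}")
        lines.append(f"  A: {reply}")
    lines.append("Write a response that respects every preference above.")
    return "\n".join(lines)


# Exchanges paraphrased from the game-design example.
game_transcript = [
    ("Which platform or type of game?", "Mobile, and I enjoy puzzles."),
    ("Any rules in mind?", "Not yet, I'd like suggestions."),
    ("How about time manipulation?", "Interesting, tell me more."),
]

final_prompt = build_prompt("Design an interesting game", game_transcript)
print(final_prompt)
```

Keeping the raw questions and answers in the prompt is the simplest choice; a summarization step could shorten it at the cost of one more model call.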

GitHub: https://github.com/alextamkin/generative-elicitation

Paper: https://arxiv.org/abs/2310.11589