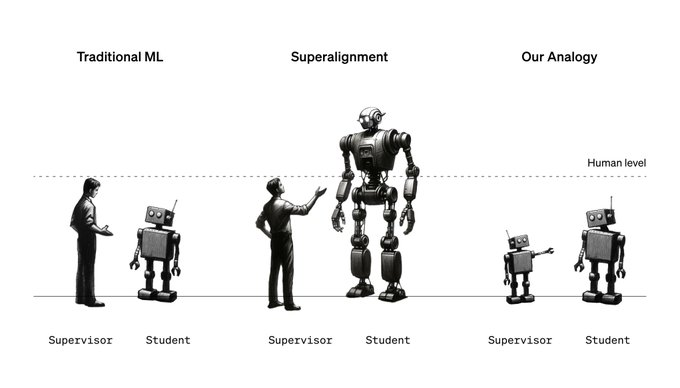

How to use less capable AI models to guide and control more powerful AI models.

This research addresses a central question: once AI systems become smarter than humans, how can humans still supervise and control them effectively?

The results show that even a relatively weak AI model can, to a certain extent, effectively guide the training and behavior of a more advanced one. For example, GPT-2, an earlier AI model, can be used to help train GPT-4, a more advanced AI model.

1. The concept of weak-to-strong generalization: This study explores “weak-to-strong generalization,” the use of weaker AI models to supervise and guide stronger AI models.

Here, “weak” and “strong” refer to a model’s capabilities or complexity. Weaker models are usually earlier models with limited capabilities, while stronger models are more advanced and complex.

2. Experimental setup: In this study, OpenAI used GPT-2 as a weaker model to supervise GPT-4’s training. GPT-2 is an earlier AI language model, while GPT-4 is a more advanced, larger, and more sophisticated model.

With this setup, the researchers set out to test whether a weaker model can effectively influence the learning and behavior of a stronger model.

The researchers used GPT-2’s output to communicate the task to GPT-4: GPT-4 was fine-tuned on labels produced by GPT-2 rather than on ground-truth labels.
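The setup described above can be sketched on a toy problem. This is a minimal illustration, not OpenAI's code: the task, `weak_supervisor`, `train_student`, and `run_experiment` are all hypothetical stand-ins, with a one-feature rule playing the role of GPT-2 and a small perceptron playing the role of GPT-4.

```python
import random

def make_data(n, rng):
    """Toy binary task: the true label is 1 when x0 + x1 > 0."""
    points = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(n)]
    return [(x, int(x[0] + x[1] > 0)) for x in points]

def weak_supervisor(x):
    """Hypothetical weak model: it only looks at the first feature."""
    return int(x[0] > 0)

def train_student(examples, epochs=20):
    """Hypothetical strong student: a perceptron that sees both features."""
    w = [0.0, 0.0]
    for _ in range(epochs):
        for x, y in examples:
            pred = int(w[0] * x[0] + w[1] * x[1] > 0)
            err = y - pred  # -1, 0, or +1
            w[0] += err * x[0]
            w[1] += err * x[1]
    return lambda x: int(w[0] * x[0] + w[1] * x[1] > 0)

def accuracy(model, data):
    """Fraction of examples where the model matches the ground-truth label."""
    return sum(model(x) == y for x, y in data) / len(data)

def run_experiment(seed=0):
    rng = random.Random(seed)
    train, test = make_data(300, rng), make_data(500, rng)
    # Step 1: the weak supervisor labels the training inputs.
    weak_labeled = [(x, weak_supervisor(x)) for x, _ in train]
    # Step 2: the strong student is trained on the weak labels only.
    student = train_student(weak_labeled)
    # Step 3: a ceiling student is trained on ground truth for comparison.
    ceiling = train_student(train)
    # All three are scored against ground-truth test labels.
    return {
        "weak": accuracy(weak_supervisor, test),
        "weak_to_strong": accuracy(student, test),
        "strong_ceiling": accuracy(ceiling, test),
    }

if __name__ == "__main__":
    print(run_experiment())
```

The three numbers it reports (weak supervisor, student trained on weak labels, student trained on ground truth) are the quantities such an experiment compares. The toy only illustrates the structure of the setup. Its student can represent the weak rule exactly, so it will tend to imitate the supervisor rather than surpass it, and it should not be read as reproducing the study's results.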

3. Research results: Experiments show that with this method, GPT-4 typically reaches a performance level between GPT-3 and GPT-3.5. This suggests that GPT-2 can, to some extent, guide GPT-4 to learn specific tasks or behaviors. Much of GPT-4’s capability can be recovered even though GPT-2 is nowhere near as capable as GPT-4.

This means that a weaker AI model, such as GPT-2, can have a significant impact on a more powerful AI model, such as GPT-4, when acting as its supervisor.
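One way to put a number on how much of the stronger model's potential is recovered is the "performance gap recovered" measure used in the paper: the share of the gap between the weak supervisor and the strong model's ceiling that the weakly supervised strong model closes. The sketch below is a minimal illustration; the function name and the accuracy values in the example are hypothetical, not figures from the study.

```python
def performance_gap_recovered(weak_acc, weak_to_strong_acc, strong_ceiling_acc):
    """Fraction of the weak-to-ceiling gap closed by the weakly supervised model.

    0.0 means the strong model did no better than its weak supervisor;
    1.0 means it matched a strong model trained on ground-truth labels.
    """
    gap = strong_ceiling_acc - weak_acc
    if gap <= 0:
        raise ValueError("strong ceiling must exceed weak supervisor accuracy")
    return (weak_to_strong_acc - weak_acc) / gap

if __name__ == "__main__":
    # Hypothetical accuracies, chosen only to illustrate the arithmetic.
    pgr = performance_gap_recovered(0.60, 0.80, 0.90)
    print(f"PGR = {pgr:.2f}")
```

With these made-up values the weakly supervised model closes about two thirds of the gap.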

4. Research significance:

This finding has implications for AI alignment and control:

- Effectiveness of weak supervision: It is usually assumed that the supervisor of an AI model needs to be more powerful than, or at least as powerful as, the supervised model to ensure effective learning and control. However, this study shows that even less capable models can effectively guide more powerful models.

- Implications for future AI alignment: As AI technology evolves, AI systems far beyond human intelligence may emerge. In that case, humans will be relatively weak overseers. This research points to a possible solution: even weak supervisors may be able to guide and control superintelligent AI effectively.

- Security management of superhuman intelligence: This research provides new ideas for how to safely manage and control superhuman intelligent AI. It suggests that with the right methods and techniques, we can expect to maintain effective control over advanced AI systems even when humans become weak overseers.

To encourage further research in the field, OpenAI has released open-source code and the paper.

GitHub: https://github.com/openai/weak-to-strong

Paper: https://cdn.openai.com/papers/weak-to-strong-generalization.pdf

OpenAI has also launched a $10 million grant program to support a wide range of research on superhuman AI alignment, particularly work related to weak-to-strong generalization. Application: https://openai.com/blog/superalignment-fast-grants