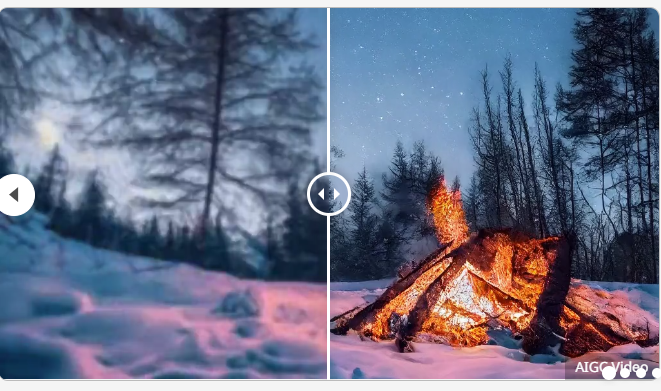

Video generation is getting fiercely competitive on every front. Here’s a demo and an introduction:

Introduction:

Upscale-A-Video is a text-guided latent diffusion framework for video upscaling. The framework ensures temporal consistency through two key mechanisms. Locally, it integrates temporal layers into the U-Net and the VAE-Decoder, maintaining consistency within short sequences.

Globally, a flow-guided recurrent latent propagation module is introduced to enhance overall video stability by propagating and fusing latents across the entire sequence.
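To make the global propagation step more concrete, here is a minimal sketch of a forward recurrent pass that blends each frame's latent with the aligned latent of the previous frame. Everything in it is an assumption made for illustration: the names `propagate_latents`, `warp`, `valid` and `beta` are hypothetical, latents are plain lists of floats rather than feature maps, and the fusion rule is a simple masked blend, not necessarily the one the project uses.

```python
from typing import Callable, List, Sequence

Latent = List[float]  # toy stand-in for a latent feature map


def propagate_latents(
    latents: Sequence[Latent],
    warp: Callable[[Latent, int], Latent],
    valid: Callable[[int], List[float]],
    beta: float = 0.5,
) -> List[Latent]:
    """Fuse each latent with the warped, already-fused previous latent.

    warp(prev, i)  aligns the previous latent to frame i (hypothetical; a real
                   system would warp with estimated optical flow).
    valid(i)       returns per-element weights in [0, 1] marking where the
                   alignment is trusted (hypothetical).
    beta           controls how strongly the previous frame is blended in.
    """
    if not latents:
        return []
    fused = [list(latents[0])]  # the first frame has nothing to inherit
    for i in range(1, len(latents)):
        aligned = warp(fused[-1], i)
        mask = valid(i)
        fused.append([
            (1 - beta * m) * cur + beta * m * prev
            for cur, prev, m in zip(latents[i], aligned, mask)
        ])
    return fused


# Toy usage: identity warp, and only the first element is trusted.
frames = [[0.0, 0.0], [1.0, 1.0], [1.0, 1.0]]
smoothed = propagate_latents(
    frames, warp=lambda z, i: z, valid=lambda i: [1.0, 0.0], beta=0.5
)
```

Because each fused latent feeds the next step, information from early frames carries through the whole sequence, while elements marked as untrusted keep their own value.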

Thanks to the diffusion paradigm, the model also offers a trade-off between fidelity and quality: text prompts guide texture creation, and an adjustable noise level balances restoration against generation.
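The role of the noise level can be illustrated with the standard forward-diffusion mix of signal and Gaussian noise. This is only a sketch: `add_noise` and `alpha_bar` are hypothetical names, and how Upscale-A-Video actually parameterises its noise level is not stated here. The point is simply that more noise hides more of the input, leaving the model freer to generate detail, while less noise keeps the result closer to the source.

```python
import math
import random
from typing import List


def add_noise(latent: List[float], alpha_bar: float, seed: int = 0) -> List[float]:
    """Mix a latent with Gaussian noise: sqrt(a) * z + sqrt(1 - a) * eps.

    alpha_bar = 1.0 keeps the input untouched (maximum fidelity);
    alpha_bar = 0.0 replaces it with pure noise (maximum freedom to generate).
    """
    if not 0.0 <= alpha_bar <= 1.0:
        raise ValueError("alpha_bar must lie in [0, 1]")
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    signal = math.sqrt(alpha_bar)
    noise = math.sqrt(1.0 - alpha_bar)
    return [signal * z + noise * rng.gauss(0.0, 1.0) for z in latent]


latent = [1.0, 2.0, 3.0]
faithful = add_noise(latent, alpha_bar=0.95)  # stays close to the input
creative = add_noise(latent, alpha_bar=0.30)  # input is mostly obscured
```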

Methods:

Upscale-A-Video handles long videos with both local and global strategies to maintain temporal coherence. It divides the video into segments and processes them with a U-Net that has temporal layers, which keeps each segment internally consistent. At user-specified diffusion steps, a recurrent latent propagation module performs global refinement to improve consistency between segments. Finally, a fine-tuned VAE-Decoder reduces the remaining flicker artifacts for low-level consistency.
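The segmentation step above can be sketched as a small helper. The function name `split_into_segments`, the segment length and the optional overlap are all assumptions for illustration; the description does not say how long the segments are or whether they overlap.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def split_into_segments(
    frames: Sequence[T], segment_len: int, overlap: int = 0
) -> List[List[T]]:
    """Split a frame sequence into consecutive segments.

    segment_len  number of frames per segment (the last one may be shorter).
    overlap      frames shared between neighbouring segments (hypothetical
                 option; 0 gives disjoint segments).
    """
    if segment_len <= 0:
        raise ValueError("segment_len must be positive")
    if not 0 <= overlap < segment_len:
        raise ValueError("overlap must satisfy 0 <= overlap < segment_len")
    step = segment_len - overlap
    segments: List[List[T]] = []
    for start in range(0, len(frames), step):
        segments.append(list(frames[start:start + segment_len]))
        if start + segment_len >= len(frames):
            break  # this segment already reaches the final frame
    return segments


frame_ids = list(range(10))
disjoint = split_into_segments(frame_ids, segment_len=4)
overlapping = split_into_segments(frame_ids, segment_len=4, overlap=1)
```

Each segment would then be passed through the local stage on its own, with the global propagation step tying the segments together afterwards.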

Results:

Extensive experiments show that Upscale-A-Video outperforms existing methods on both synthetic and real-world benchmarks, and that it delivers impressive visual realism and temporal consistency on AI-generated videos.

Project address: https://shangchenzhou.com/projects/upscale-a-video/

Video address: