Twelve Labs has introduced Pegasus-1, an advanced video-language foundation model with approximately 80 billion parameters.

It can process videos ranging from 10 seconds to several hours long, recognizing and parsing their content to generate more comprehensive and accurate text descriptions.

It can comprehensively understand the people, objects, and scenes in the video, as well as background music, dialogue, and more.

Key features:

1. Multimodal understanding:

Pegasus-1 not only processes visual information from video, but also understands audio and speech information. This means that it can have a more comprehensive understanding of video content, including the people, objects, and scenes that appear in the video, as well as background music, dialogue, etc.

2. Efficient long video processing:

The model is optimized to handle videos of varying lengths, from as short as 10 seconds to several hours.

3. Video-text generation:

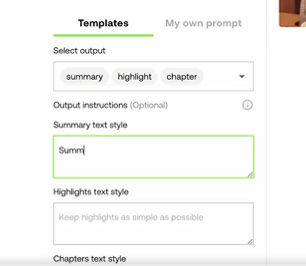

With a single API call, developers can prompt the Pegasus-1 model to generate specific text output from their video data. This includes video summarization, key point extraction, and auto-generated tags and titles, among other tasks.

4. Strong benchmark performance:

On the MSR-VTT dataset and the Video-ChatGPT video descriptions dataset, Pegasus-1 showed relative improvements of 61% and 47%, respectively, over the existing state-of-the-art models.
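To make "relative improvement" concrete, here is a minimal sketch of the arithmetic. The scores below are hypothetical values chosen only to illustrate the formula; they are not figures from the Twelve Labs report, and the function name is an assumption for this example.

```python
def relative_improvement(baseline: float, new: float) -> float:
    """Return the improvement of `new` over `baseline` as a percentage of `baseline`."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (new - baseline) / baseline * 100.0


# Hypothetical scores, not taken from the report.
baseline_score = 0.50
new_score = 0.805
print(f"{relative_improvement(baseline_score, new_score):.1f}%")  # prints "61.0%"
```

A relative improvement of 61% therefore means the new score is 1.61 times the baseline score, not that it is 61 percentage points higher.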

5. API Access:

Pegasus-1 provides a set of video-to-text APIs that are flexible and can be used for a variety of downstream tasks.

Details: https://app.twelvelabs.io/blog/introducing-pegasus-1

Working principle:

Unlike many methods that frame video understanding as an image or speech comprehension problem, Twelve Labs employs a “video-first” strategy.

This strategy has four core principles: efficient long-form video processing, multimodal understanding, video-native embeddings, and deep alignment between video and language embeddings.

The model consists of three main components: a video encoder, a video-language alignment model, and a language decoder.

1. Video encoder: responsible for extracting visual, audio, and speech information from videos and generating video embeddings. It evaluates video frames and their temporal relationships to obtain relevant visual information while processing audio signals and speech information.

2. Video-language alignment model: this component bridges video embeddings and the language model's domain. It ensures that the language model interprets video embeddings in much the same way it interprets text tokens.

3. Language decoder: drawing on its extensive knowledge base, the decoder interprets the aligned embeddings according to the user's prompt and decodes this information into coherent, easy-to-read text.

These three components are trained together, allowing the model to understand and generate text related to video content more accurately.
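The flow of data through the three components can be sketched as a toy pipeline. This is only an illustration of the shapes involved, not the actual Pegasus-1 implementation: every class, function, and parameter name here is hypothetical, the encoder and decoder are trivial stand-ins, speech is omitted for brevity, and the alignment step is assumed to be a simple linear projection.

```python
from dataclasses import dataclass
from typing import List

Vector = List[float]


@dataclass
class Video:
    frames: List[Vector]  # one toy feature vector per sampled frame
    audio: Vector         # toy clip-level audio features


def video_encoder(video: Video) -> List[Vector]:
    """Toy encoder: fuse each frame with the mean audio feature, one embedding per frame."""
    audio_mean = sum(video.audio) / len(video.audio)
    return [[x + audio_mean for x in frame] for frame in video.frames]


def alignment_model(embeddings: List[Vector], weights: List[Vector]) -> List[Vector]:
    """Toy alignment: linearly project video embeddings into the decoder's embedding space."""
    return [
        [sum(w * x for w, x in zip(row, emb)) for row in weights]
        for emb in embeddings
    ]


def language_decoder(aligned: List[Vector], prompt: str) -> str:
    """Toy decoder: report what a real decoder would condition on."""
    return f"[{len(aligned)} video tokens of dim {len(aligned[0])}] + prompt: {prompt!r}"


video = Video(frames=[[0.1, 0.2], [0.3, 0.4]], audio=[0.0, 1.0])
weights = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # maps dimension 2 to dimension 3
aligned = alignment_model(video_encoder(video), weights)
output = language_decoder(aligned, "Summarize this video.")
print(output)
```

The point of the sketch is the interface: the alignment step changes the embedding dimension so that video embeddings can sit alongside text tokens in the decoder's input, which is the role the text describes for the video-language alignment model.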

Dataset:

Twelve Labs has collected over 300 million diverse, curated video-text pairs. This makes it one of the largest video-text corpora for video-language foundation model training.

Initial training subset: The technical report is based on an initial training run with 35 million video-text pairs and over 1 billion image-text pairs. This subset accounts for about 10% of the total dataset.

This dataset is not only large, but also high-quality and diverse, which helps Pegasus-1 achieve advanced performance on multiple evaluation metrics.

MSR-VTT dataset introduction: https://microsoft.com/en-us/research/wp-content/uploads/2016/06/cvpr16.msr-vtt.tmei_-1.pdf

Video-ChatGPT video description dataset: https://arxiv.org/pdf/2306.05424

Original: https://twitter.com/i/status/1718456086150435074