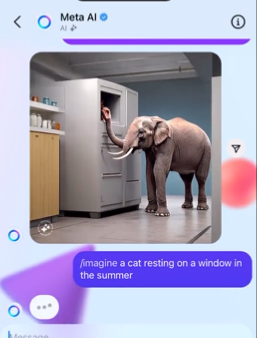

An advanced image generation model specifically designed to generate highly aesthetic images.

Emu is a new image generation model from Meta AI that can quickly generate high-quality, realistic images. The model was first pre-trained on 1.1 billion image-text pairs and then fine-tuned on a small, carefully selected set of high-quality images to further enhance the visual appeal of its output.

As a result, Emu excels in visual appeal, outperforming other advanced image generation models.

Emu characteristics:

1. Combination of aesthetics and functionality: Pre-trained image generation models often struggle to produce highly aesthetic images. Emu addresses this with an aesthetic alignment stage applied after pre-training.

2. Efficient quality tuning: Surprisingly, only a few thousand selected high-quality images are enough to significantly improve the quality of the generated images. This means quality tuning does not require large amounts of data or computing resources.

3. Wide range of applications: Text-to-image generation has many uses, including artistic creation, advertising design, and game development.

Technical details:

Emu is based on a latent diffusion model (LDM), a deep learning architecture that generates images from text input by running the diffusion process in a compressed latent space rather than directly on pixels.

1. Pre-training and fine-tuning: Emu was pre-trained on 1.1 billion image-text pairs and then fine-tuned on a few thousand selected high-quality images. The data is preprocessed so that the model can better learn how to generate images from text.

2. Quality optimization: After the base model was trained, it went through quality tuning, a fine-tuning stage that uses hundreds to thousands of specifically chosen images to increase the visual appeal of the generated images.

3. Generality across architectures: Quality tuning is not specific to Emu's latent diffusion architecture. According to the paper, the same approach is also effective for other types of generation models, such as pixel diffusion models and masked generative transformer models.
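The data selection behind quality tuning can be illustrated with a minimal sketch. It assumes each candidate image already carries a quality score from some upstream filter or human rater; the `Candidate` type, the `quality_score` field, the threshold, and the size cap are all hypothetical and are not taken from the Emu paper, which describes its own curation process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """One image-text pair with a hypothetical quality score in [0, 1]."""
    image_id: str
    caption: str
    quality_score: float


def select_quality_set(candidates, min_score=0.9, max_size=2000):
    """Keep the highest-scoring candidates at or above min_score, capped at max_size.

    Both defaults are arbitrary illustration values, not figures from the paper.
    """
    kept = [c for c in candidates if c.quality_score >= min_score]
    kept.sort(key=lambda c: c.quality_score, reverse=True)
    return kept[:max_size]


# Toy pool; the scores are made up for the example.
pool = [
    Candidate("img-001", "a lighthouse at dusk", 0.97),
    Candidate("img-002", "blurry street photo", 0.41),
    Candidate("img-003", "portrait with soft light", 0.93),
    Candidate("img-004", "overexposed beach", 0.62),
]

quality_set = select_quality_set(pool, min_score=0.9, max_size=2)
print([c.image_id for c in quality_set])  # ['img-001', 'img-003']
```

The point of the sketch is the shape of the step: a very large pool is reduced to a small, strictly filtered set, and only that set is used for the final fine-tuning pass.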

Performance evaluation:

Compared with its pre-trained-only counterpart, Emu achieved a win rate of 82.9%. Compared with the state-of-the-art SDXLv1.0, Emu was preferred for visual appeal 68.4% of the time on the PartiPrompts benchmark and 71.3% of the time on the Open User Input benchmark.
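For readers unfamiliar with preference-based metrics, the sketch below shows one common way to turn pairwise votes into a win rate. It assumes that each comparison yields a single label naming the preferred model or `"tie"`, and that ties are excluded from the denominator; the paper's exact protocol may differ. The function name, the labels, and the vote counts are invented for illustration and are not the paper's data.

```python
from collections import Counter


def win_rate(votes, model):
    """Share of decided (non-tied) comparisons won by `model`."""
    counts = Counter(votes)
    decided = sum(n for winner, n in counts.items() if winner != "tie")
    if decided == 0:
        raise ValueError("no decided comparisons")
    return counts[model] / decided


# Toy votes: 7 wins for "emu", 3 for "baseline", 2 ties.
votes = ["emu"] * 7 + ["baseline"] * 3 + ["tie"] * 2
print(f"{win_rate(votes, 'emu'):.1%}")  # 70.0%
```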

Emu performs well not only in image quality but also in diversity and accuracy. This makes it a promising tool for a variety of applications, from media and entertainment to scientific research and education.

Details: ai.meta.com/research/publications/emu-enhancing-image-generation-models-using-photogenic-needles-in-a-haystack/

Paper: scontent-xsp1-1.xx.fbcdn.net/v/t39.2365-6/1