SynthID: Identifying AI-generated content

SynthID uses deep learning models and algorithms to embed and detect watermarks in AI-generated content. It helps users determine whether content was generated by Google’s AI tools without affecting the quality of the original content. It has been integrated into Google products such as Imagen, ImageFX, and Gemini, and also supports AI-generated music through Lyria.

Users can check whether an image was generated by Google AI tools through Google Search or the “About This Image” feature in Chrome. SynthID’s text watermarking technology has been published in Nature and open-sourced, and Google is working with Hugging Face to make it more widely available on developer platforms.

Original text: https://deepmind.google/technologies/synthid/

Project name: VirtualWife

Project function: Virtual digital human

Project Description: A virtual digital human project that creates a virtual companion with a “soul” that can interact with users and meet their emotional needs.

It supports live streaming on Bilibili and integrates multiple large language model backends, such as OpenAI and Ollama. Users can run it on different operating systems through a simple Docker deployment.

It offers customizable role settings, short- and long-term memory, text-driven expressions and actions, and voice dialogue.

https://github.com/yakami129/VirtualWife

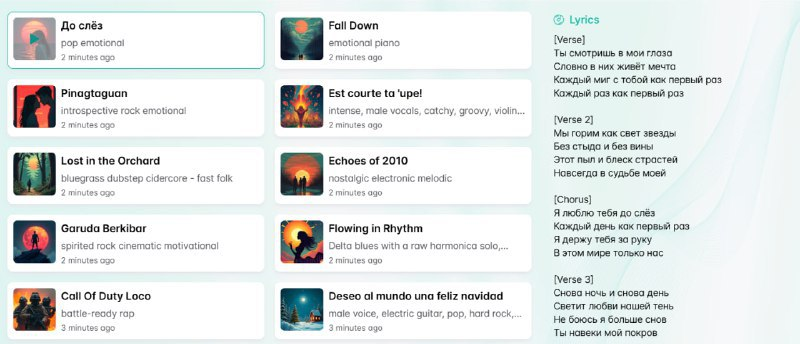

Name: Brev.ai

Website Function: AI Music

Website Introduction: An innovative online AI music generator that quickly creates high-quality music from text descriptions.

It uses Suno V3.5 technology to transform users’ words into melodies, harmonies, and even complete songs, suitable for scenarios such as videos and social media.

Website: https://brev.ai/

Google releases SAIF risk assessment tool to help secure AI systems

Google has launched the SAIF Risk Assessment tool, designed to help AI developers and organizations assess the security posture of their AI systems. The questionnaire-based tool generates a customized list of risks and mitigation suggestions, covering risks such as data poisoning, prompt injection, and model source tampering. It is part of the Secure AI Framework (SAIF) and is aligned with the AI risk governance workstream of the Coalition for Secure AI (CoSAI), with the aim of building a more secure AI ecosystem. Google has also updated the SAIF.Google website to provide more AI security information.

Original text: https://blog.google/technology/safety-security/google-ai-saif-risk-assessment/

Thank you for watching this video. If you liked it, please like and subscribe. Thank you.

YouTube: