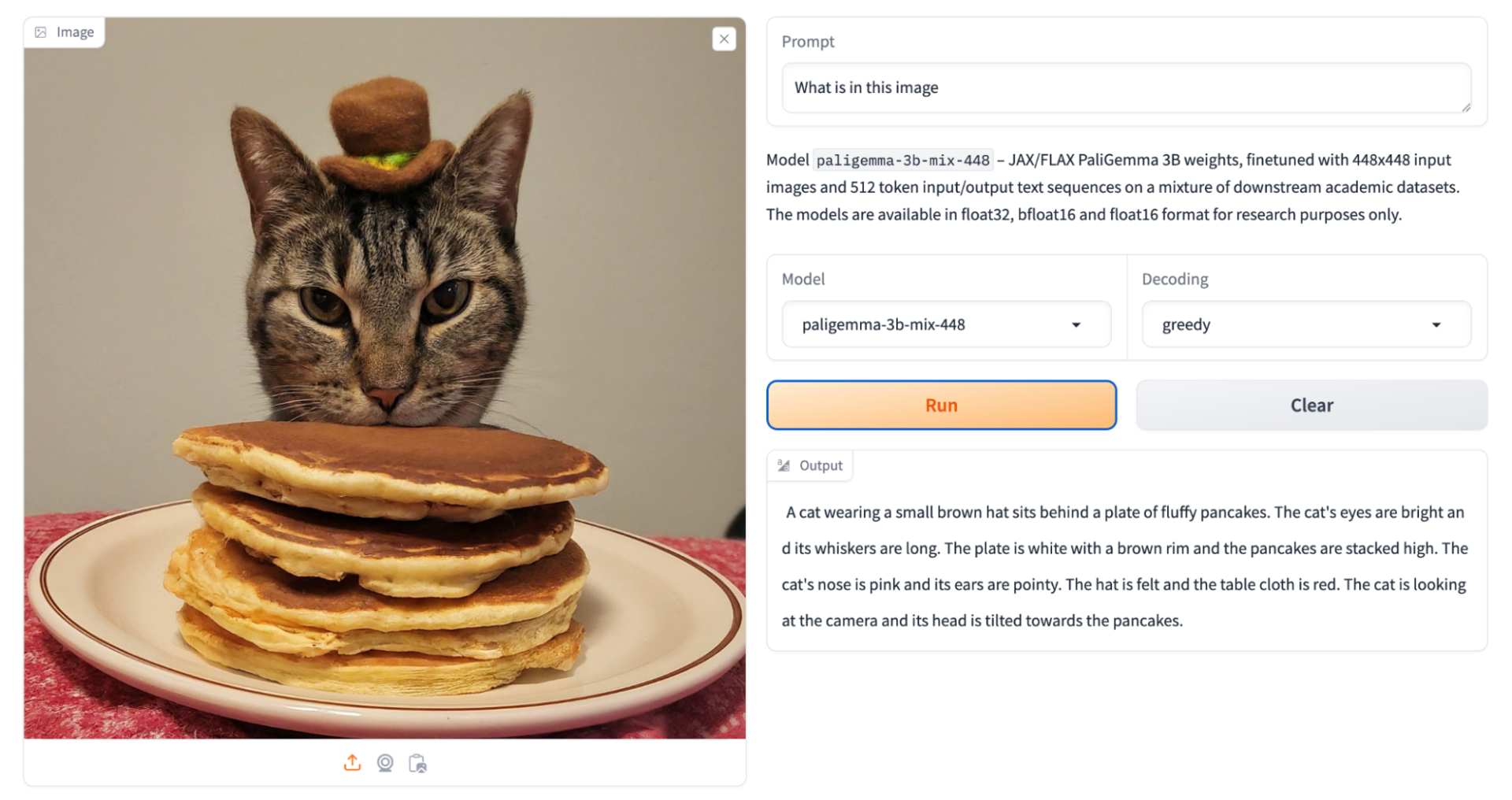

Supports multiple visual language tasks such as image and video

Including supporting tasks such as image and Short Video captioning, visual question and answer, image text understanding, object detection file chart interpretation, image segmentation and other tasks.

The PaliGemma model contains 3 billion (3B) parameters and combines the SigLiP visual encoder and the Gemma language model.

At Google, we believe in collaboration and open research driving innovation, and we are pleased to see Gemma becoming popular among the community, receiving millions of downloads in just a few months after its release.

This enthusiastic response has been very encouraging, as developers have created a variety of projects, from Navarasa (a multilingual variant of the Indian language) to Octopus v2 (an on-device operating model), and developers are demonstrating Gemma’s potential to create influential and easy-to-access projects.

This spirit of exploration and creativity has also driven us to develop CodeGemma (with powerful code completion and generation capabilities) and Recurrent Gemma (which provides efficient reasoning and research possibilities).

Gemma is a series of lightweight, state-of-the-art open models built using the same research and techniques used to create the Gemini model. Today, we are excited to further expand the Gemma series with the launch of PaliGemma, a powerful Open Visual Language Model (VLM), and preview the near future with the release of Gemma 2. In addition, we are further expanding our commitment to responsible artificial intelligence by updating the Responsible Generation AI toolkit to provide developers with new and enhanced tools to assess model security and filter harmful content.

Introduction to PaliGemma: Open Visual Language Model

PaliGemma is a powerful open VLM inspired by PaLI-3. PaliGemma is built on open components such as SigLIP vision model and Gemma language model, aiming to achieve best-in-class fine-tuning performance on a variety of visual language tasks. This includes captioning images and Short Video, visual question and answer, understanding text in images, object detection, and object segmentation.

We provide pre-training and fine-tuning checkpoints at multiple resolutions, as well as checkpoints specifically adjusted for mixed tasks for instant exploration.

To promote open exploration and research, PaliGemma is available through a variety of platforms and resources. Start exploring today with free options like Kaggle and Colab notebooks. Academic researchers seeking to push the boundaries of visual language research can also apply for Google Cloud points to support their work.

Start using PaliGemma today. You can find PaliGemma on GitHub, Hugging Face Models, Kaggle, Vertex AI Model Garden and ai.nvidia.com (accelerated using TensoRT-LLM), and easily integrates through JAX and Hugging Face Transformer. (Keras integration is coming soon) You can also interact with models through this Hugging Face Space.

Announcement of Gemma 2: Next generation performance and efficiency

We are pleased to announce that the next generation Gemma model, the Gemma 2, will be available soon. Gemma2 will be available in a new size that fits a wide range of artificial intelligence developer use cases and features a new architecture specifically designed for breakthrough performance and efficiency, with the following advantages:

Leading performance: The Gemma2 has 27 billion parameters, which is comparable to the Llama 370B in performance, but is only half the size of the Llama 370B. This breakthrough efficiency sets a new standard in the field of open models.

Reduce deployment costs: Gemma 2 ‘s efficient design requires less than half the amount of computing required by similar models. The 27B model is optimized to run on NVIDIA GPUs or efficiently on a single TPU host in Vertex AI, making it easier to deploy and more cost-effective for a wider range of users.

Multifunctional tuning tool chain: Gemma 2 will provide developers with powerful tuning capabilities across different platforms and tool ecosystems. From cloud-based solutions such as Google Cloud to popular community tools such as Axolotl, fine-tuning Gemma 2 will be easier than ever. In addition, seamless partner integration with Hugging Face and NVIDIA TensorRT-LLM, as well as our own JAX and Keras, ensures that you can optimize performance and deploy efficiently across a variety of hardware configurations.

Expand the responsible generative artificial intelligence toolkit

As a result, we are extending our Responsible Generative AI Toolkit to help developers conduct more powerful model evaluations by releasing an open source LLM comparator. The LLM Comparator is a new interactive and visual tool for performing effective parallel evaluations to assess the quality and security of model responses. To see how the LLM comparator works in action, check out our demonstration, which shows a comparison between Gemma 1.1 and Gemma 1.0.

We hope this tool will further advance the mission of the toolkit and help developers create artificial intelligence applications that are not only innovative, but also safe and responsible.

As we continue to expand the Gemma family of open models, we remain committed to creating a collaborative environment where cutting-edge artificial intelligence technology and responsible development go hand in hand. We are excited to see what you have built with these new tools and how we can together shape the future of artificial intelligence.

If you want to learn more, you can click on the link below the video.

Thank you for watching this video. If you like it, please subscribe and like it. thank

Original text:https://developers.googleblog.com/en/gemma-family-and-toolkit-expansion-io-2024/

Oil tubing: