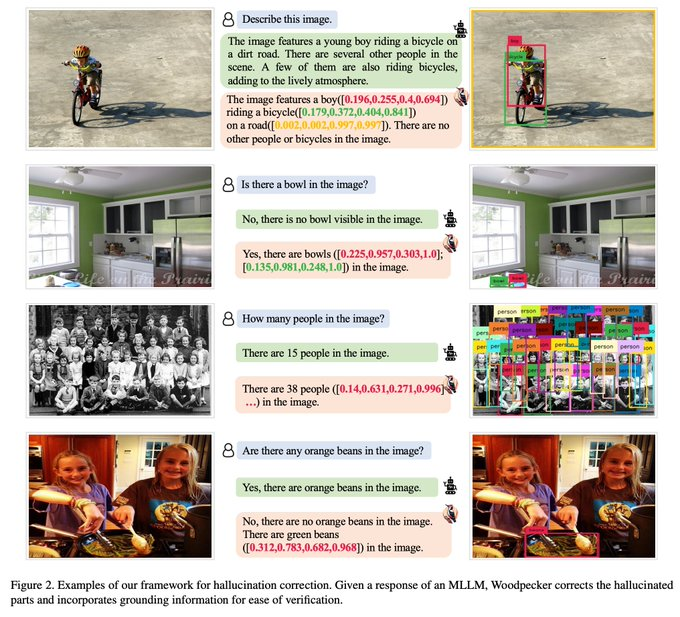

A large method to correct the “hallucination” of multimodal large language models.

Woodpecker does not rely on retraining models or using specific datasets, solving this problem in a way that requires no training. It is a post-processing step that can be applied to any existing multimodal large language model.

In the POPE benchmark, the accuracy improved by 30.66%/24.33% compared to the baseline MiniGPT-4 and mPLUG-Owl.

HuggingFace:https://huggingface.co/papers/2310.16045

Paper: https://arxiv.org/abs/2310.16045

GitHub:https://github.com/BradyFU/Woodpecker

Demo: https://60d1b7c6f5408b81d1.gradio.live

Working principle:

Woodpecker offers a comprehensive and effective approach to addressing hallucinations in multimodal large language models without the need for costly retraining or the use of specific datasets.

Woodpecker is a five-stage process, each with its own specific algorithms and techniques. For example, in the visual knowledge verification stage, convolutional neural networks (CNNs) may be used for image classification; In the key concept extraction stage, algorithms such as TF-IDF or Word2Vec may be used.

1. Key Concept Extraction: Extract key concepts from the generated text through natural language processing techniques such as Named Entity Recognition (NER) or keyword extraction.

2. Question Posing: Once key concepts are identified, Woodpecker automatically generates questions designed to verify whether these concepts are accurately represented in the visual content.

3. Visual knowledge verification: This step uses image recognition or object detection algorithms to answer the questions raised earlier. These algorithms are often based on deep learning and have been trained on large amounts of image data.

4. Visual Statement Generation: Based on the results of visual verification, generate a set of statements. These claims are used to describe the relationship between image content and text content.

5. Hallucination Correction: Finally, these visual statements are used to correct the generated text. If a piece of information in the text does not match the image, it will be modified or deleted.

Core Technology:

At its core, Woodpecker’s operations involve the integration of three distinct AI models, meticulously organized to address hallucinations. These three models are GPT-3.5 Turbo, Grounding DINO, and BLIP-2-FlanT5, which play a pivotal role as evaluators.

1. GPT-3.5 TurboGPT-3.5 Turbo

This is a large autoregressive language model primarily used to generate and understand natural language text. In Woodpecker, it may be used for key concept extraction and problem-building phases.

2. Grounding DINO dinosaur grounding

DINO is a model for self-supervised visual representation learning. In Woodpecker, it may be used in the visual knowledge verification phase to ensure that the generated text is consistent with the image content.

3、BLIP-2-FlanT5

This is a model for multimodal learning that can process both text and images. In Woodpecker, it may be used in the visual claim generation and hallucination correction stages.

These three models meticulously review and identify hallucinations, providing precise guidance on the model that needs correction, guiding it to align its output with its training data.

Through this approach to multi-model integration, Woodpecker is able to address hallucinations in multimodal large language models more comprehensively, providing more accurate and consistent outputs.